Why Every Ad Is a Lesson (Not a Winner): How to Improve Ad Performance

Not every UGC ad will be a winner - and that’s the point. Here’s how to turn underperforming ads into better decisions, stronger creative, and more effective UGC strategies.

Not every ad will perform. That’s just part of running ads.

Some will take off straight away. Others will barely get off the ground. And it’s easy to label those as “bad ads” or blame the creator and move on quickly. But that usually means missing the point.

Because every ad, good or bad, leaves something behind. A signal. A pattern. A clue.

If you treat ads as feedback instead of wins or losses, you start improving faster, especially when working with high volumes of UGC video ads.

When an ad underperforms, it’s usually pointing to something specific.

It might be:

Or something more subtle, like pacing or tone.

You might see:

Looking at different UGC ad examples over time helps you spot these patterns more quickly.

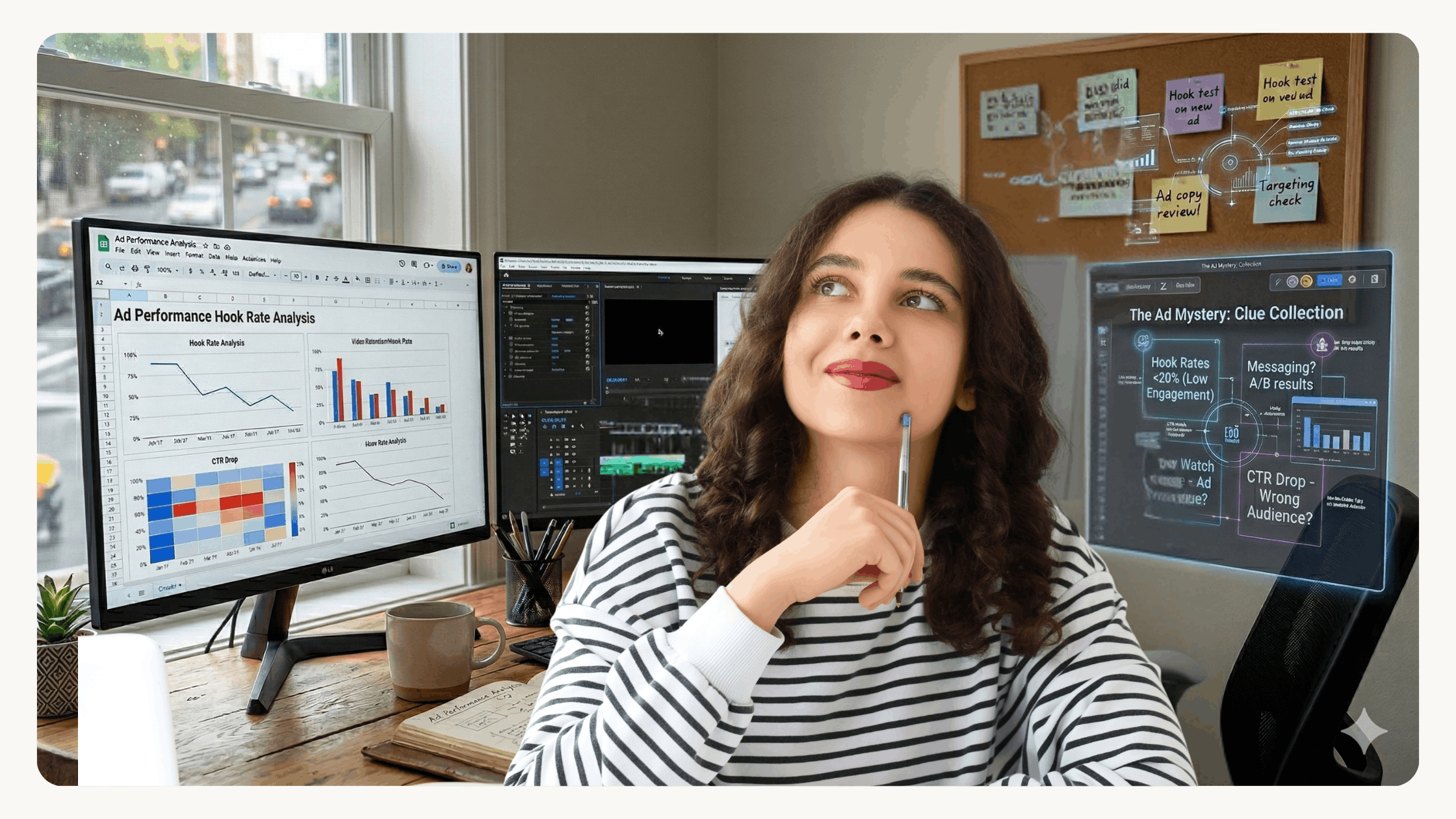

Once you start reading performance like this, testing becomes much more intentional.

The ad itself isn’t always the problem. It’s easy to blame the content first, but performance depends on more than the creative.

Sometimes the ad is doing its job perfectly:

But then something else breaks.

It could be:

That drop-off gets blamed on the ad, when actually the issue sits further down the funnel.

Creators should not be blamed for an unsuccessful ad - especially if they followed the brief carefully.

One piece of content not performing doesn’t mean the creator is the problem.

Every creator brings a different style, tone, and way of presenting a product. That variation is exactly what makes working with UGC creators valuable.

Not every angle will land. Not every delivery will resonate.

That’s expected.

What matters is:

If you treat creators as part of a testing process, not a one-shot outcome, you get far more value from every collaboration.

Even low-performing ads usually contain something worth keeping.

It might be:

Over time, these small wins build up. Instead of starting from scratch every time, you’re building on what you’ve already learned.

This is how stronger content emerges, especially when you have the setup to keep testing consistently.

The goal isn’t to get every ad right.

The goal is to:

That might mean testing:

Over time, those small tests stack up.

This is where having the right setup matters. Teams using a structured UGC workspace can test faster because sourcing, briefing, and delivery are already in place.

Instead of waiting weeks for new content, they can respond to performance in real time.

The brands that see consistent results aren’t relying on one standout piece of content.

They’re building a system:

That’s what allows them to stay consistent, even when individual ads don’t land.

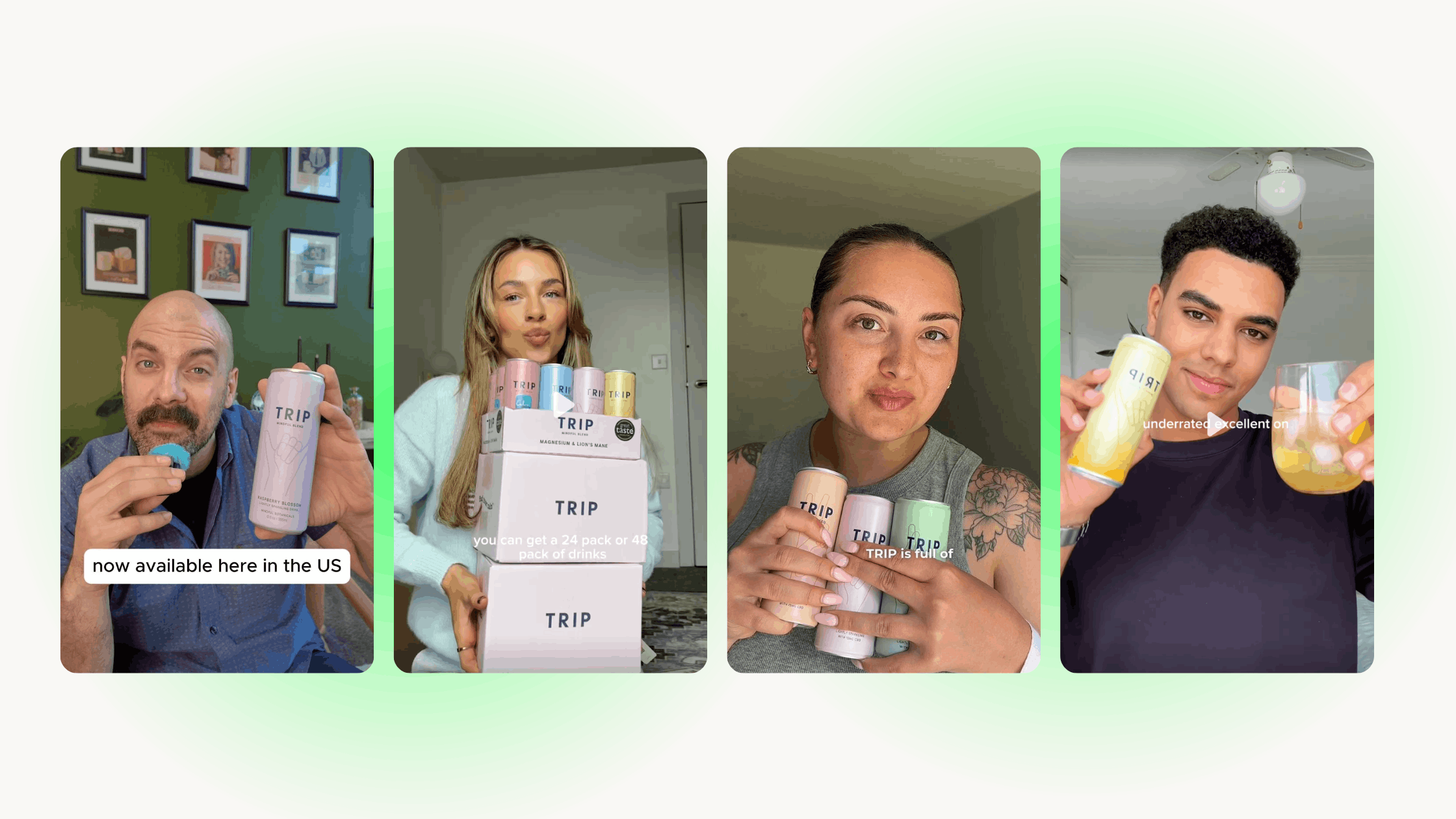

When you’re working with different UGC creators, this becomes easier to maintain. You get a steady flow of content, each bringing a slightly different angle.

That variation is what fuels better decisions.

UGC is naturally iterative.

Different creators, hooks, and formats will all perform differently. That’s not a flaw; it’s the advantage.

Not every ad needs to win - it just needs to contribute. When you approach it like that, performance becomes more consistent over time. That’s how UGC video ads improve. Not from chasing one perfect ad, but from learning across many.

Once you stop looking for winners and start looking for signals, things get clearer.

You’re not reacting to results. You’re reading them.

You start to:

That’s what moves things forward.

If there’s one shift to make, it’s this.

Stop asking if an ad worked.

Start asking what it showed you.

Because when every ad becomes a lesson, improvement becomes part of the process.

And that’s how better UGC video ads are built - supported by the right creators, the right systems, and a setup that lets you keep testing without slowing down.